Starting with Waaila

You have finally decided to start validating your data in Google Analytics and have already connected them to the Waaila app. But how should you start? Here we explore a few tests from the Waaila – Google Analytics Starting Kit.

Published: Feb 8th 2021 | 10 min read


Data quality validation is not a question of IF but rather of WHEN and HOW. With the Waaila application, you can easily check your data in Google Analytics to reveal defects and errors. You can choose from many provided tests or create your own. The application uses an advanced AI-based algorithm to detect anomalies and issues in the data from your analytics platform (Google Analytics or Piano Analytics).

To get the most out of the application, even if you have no experience with data validation, you can use prepared sets of tests available for free in Marketplace inside the application. We recommend starting with the Waaila – GA Starting Kit, a set of predesigned basic tests that are a great starting point on the way to higher-quality data.

Set up a dataset and use the Test Editor

In this section, we explain how to create a dataset from a test collection in Marketplace and briefly describe the Test Editor, where individual tests can be edited and run. To start using the Waaila – GA Starting Kit, go to Waaila Marketplace, select the Waaila – GA Starting Kit test set, open it, and click the “Add to library” button. Once the test set has been copied to your library of tests, click the “Use in dataset” button.

How to create a new dataset

- Select an existing depot where the dataset should be located or create a new one by selecting the option "Create a new depot".

- Next, select the option to "Create a new dataset" (tests can also be added from the library to an existing dataset in this step if you already have some existing datasets).

- Select the provider account. If you are using the main account with which you logged in to Waaila, it is offered in the selection field; if you need to connect a new account, follow the authentication steps below the field.

- From listed data sources, select an account, property, and view that you want to use for this testing.

- Set a custom name or leave the existing name.

- Optionally add a description.

- Confirm the configuration by clicking "Save".

Upon creating a dataset, the dataset opens, and you can run and edit the tests. To run all tests, you can use the dedicated button above the list of tests, called "Run all tests". However, you can also open the Test Editor by clicking on a test and there you can examine the test in detail, run the single test directly or edit its content.

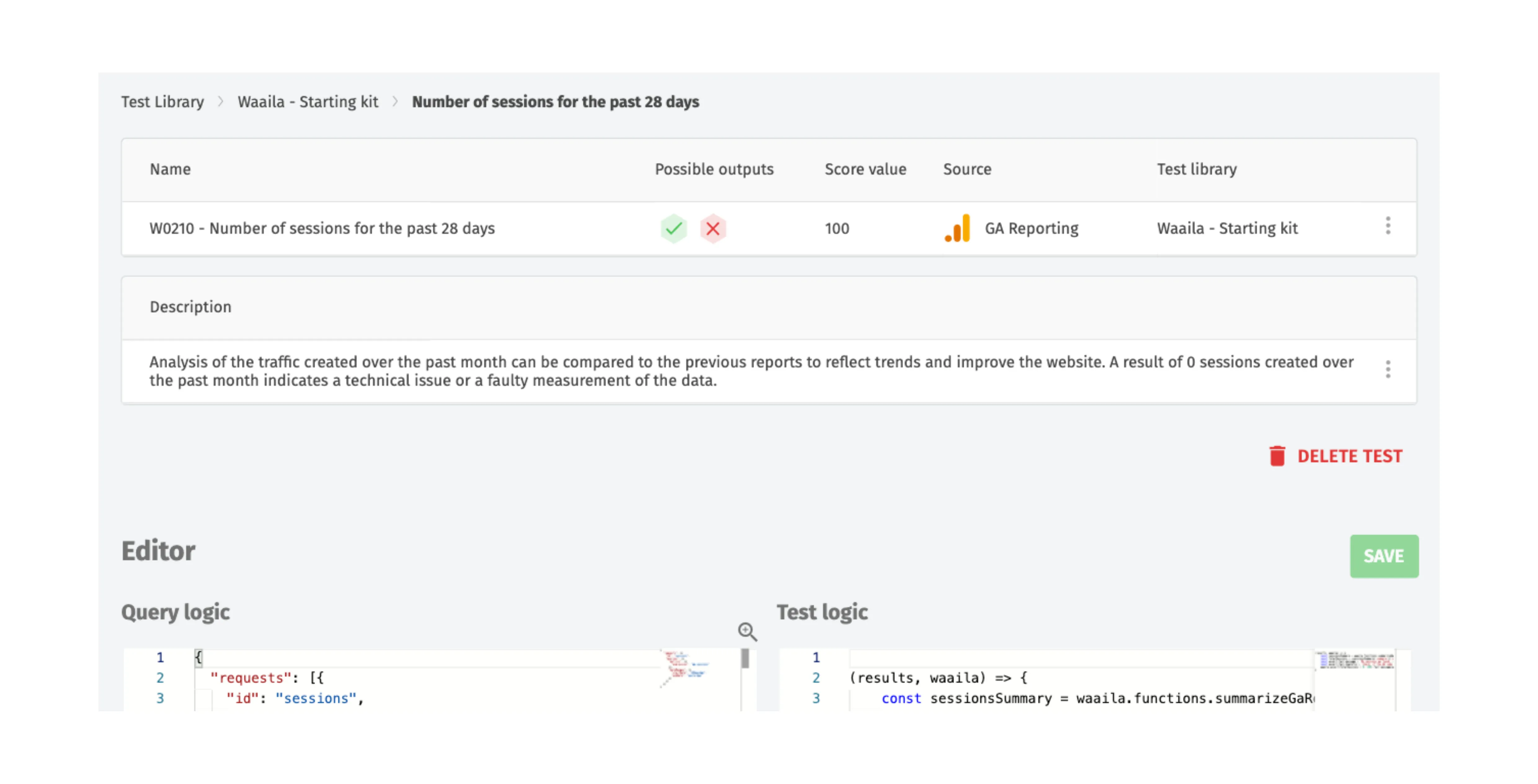

The Test Editor page is divided into several parts. At the top, below the navigation line, there is summary information about the test, including its name and description. Below this information is a multi-functional button that allows you to run the test. The main parts of the test are the Query logic and the Test logic. The Query logic specifies which data you want to load for your test. The Test logic manipulates the data, typically checks some conditions, and outputs the result. When you evaluate the test in the Test Editor, the result appears above the Query logic and Test logic.

How to run and interpret tests in Waaila – GA Starting Kit

In the test collection Waaila – GA Starting Kit there are five basic tests that can help you start with your data quality validation in Waaila. In the following subsections, we explain each test individually and show you how to interpret its result.

TEST 1 – Number of sessions for the past 28 days (W0210)

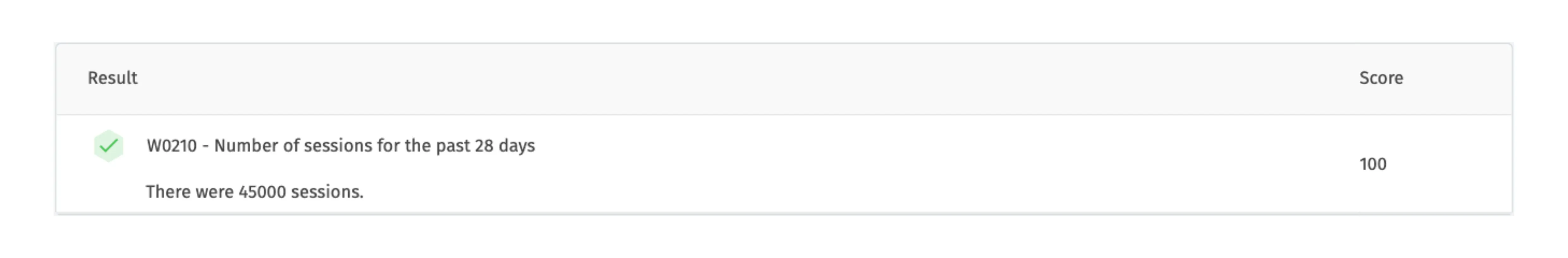

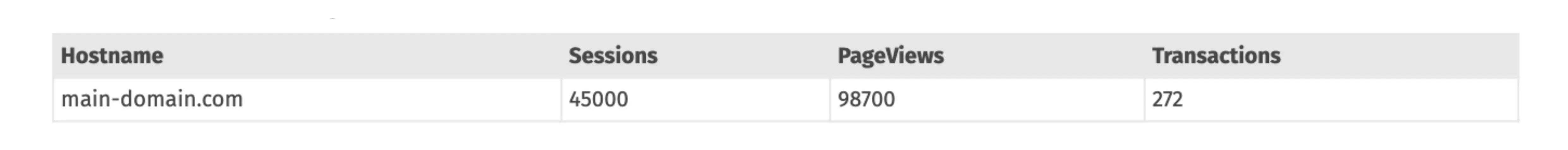

The first test offers a simple verification that some data are indeed being measured in your Google Analytics. It extracts the number of sessions in the last 28 days (up to and including yesterday’s data). The test passes if at least one session was measured in that period, so it serves as an initial check before you start evaluating data quality. Apart from a pass or fail, the test also outputs the actual number of sessions if it is positive. In the included screenshot of the Waaila app, you can see the result for a site with 45 thousand sessions in the past 4 weeks.

The test could be easily modified to compare the number of sessions to a fixed number (by changing the threshold in the assert function in the Test logic) or to check the measurement for a different period (by editing the date range in the Query logic). To verify that there has not been a blackout in measurement yesterday, there is an already prepared test Measurement blackout in the test collection Waaila - General Information and Checks.
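The logic described above can be sketched in a few lines of Python. This is only an illustration of the idea, not the actual Waaila Query logic or Test logic: the function names `last_28_days` and `check_sessions` and the parameter `min_sessions` are hypothetical and chosen for this example.

```python
from datetime import date, timedelta
from typing import Tuple


def last_28_days(today: date) -> Tuple[date, date]:
    """Return the (start, end) dates of the 28-day window ending yesterday."""
    end = today - timedelta(days=1)      # yesterday is the last day included
    start = end - timedelta(days=27)     # 28 days in total, both ends included
    return start, end


def check_sessions(sessions: int, min_sessions: int = 1) -> Tuple[str, str]:
    """Pass when at least `min_sessions` sessions were measured.

    The default of 1 mirrors the "at least one session" rule; raising it
    corresponds to comparing against a fixed number (hypothetical parameter).
    """
    if sessions >= min_sessions:
        return "pass", f"{sessions} sessions measured"
    return "fail", f"only {sessions} sessions measured (expected at least {min_sessions})"


if __name__ == "__main__":
    print(last_28_days(date.today()))
    print(check_sessions(45_000))
```

Changing `min_sessions` plays the role of changing the threshold, and changing the window length in `last_28_days` plays the role of editing the date range.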

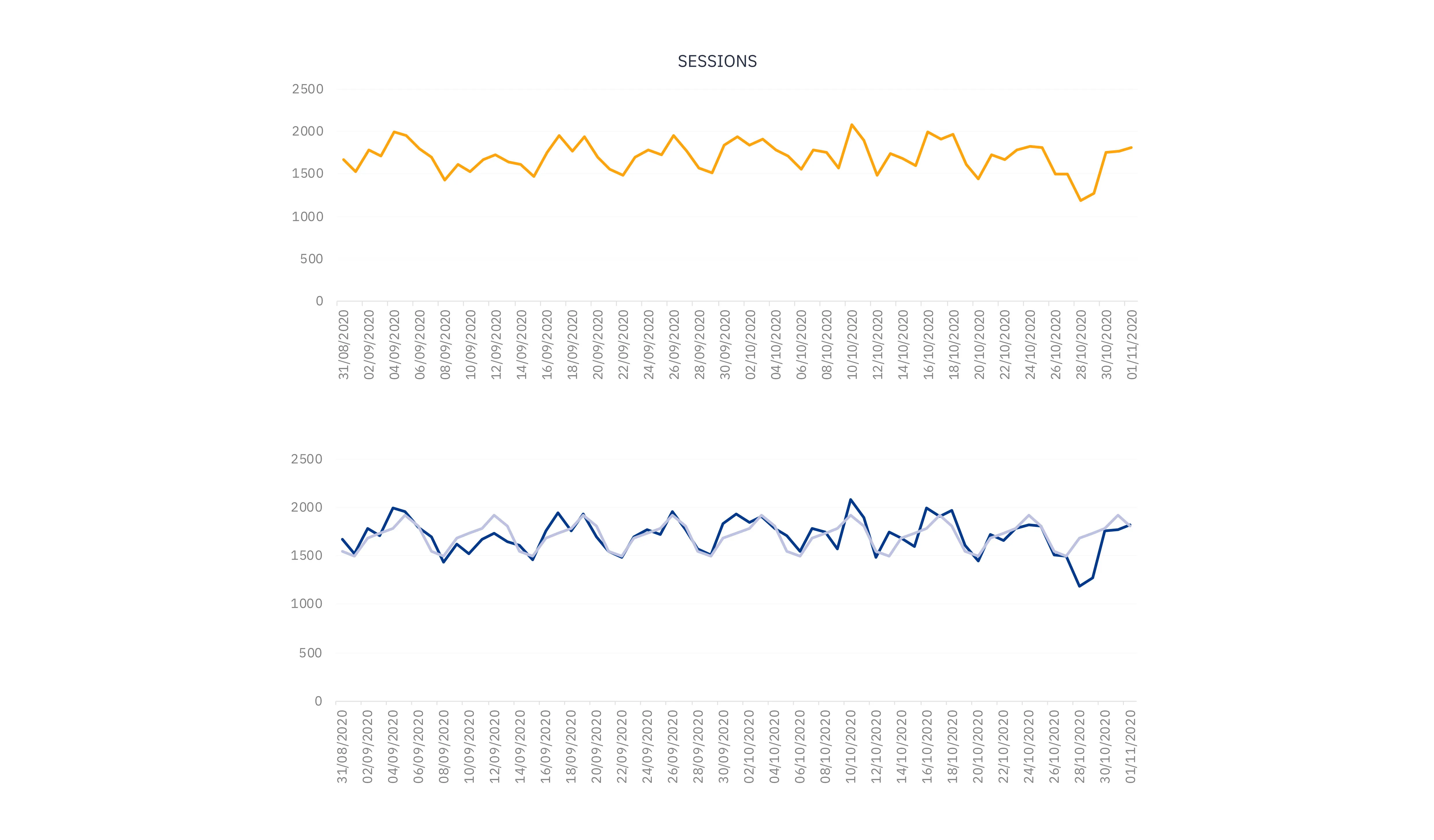

It is possible to extend the test to a dynamic comparison by including data from past months and comparing the current number of sessions with the average number of sessions per 4 weeks in the past. Alternatively, our new feature, anomaly detection, offers the most sophisticated extension to this test. This feature allows you to follow the dynamics of your weekly or daily sessions and informs you when the values jump significantly compared to their trends and cyclical patterns.

The graph below provides an illustration of the data. Within the last week of daily session data, there was a relatively large drop in the daily number of sessions. However, if it were not compared to the weekly pattern, it could easily go unnoticed. In this case, the drop can be explained by the Czech state holiday on October 28th, but in another case it could be caused by an error in the measurement or in the website functionality and would require further investigation and remediation.
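To illustrate why the weekly pattern matters, here is a deliberately simple sketch that compares the latest daily value with the average of the same weekday in previous weeks. It is not Waaila's anomaly detection algorithm, which is not described in this article; the function names and the `tolerance` parameter are assumptions made for this example.

```python
from statistics import mean
from typing import List


def same_weekday_baseline(daily_sessions: List[int], weeks: int = 4) -> float:
    """Average of the values 7, 14, ... days before the latest observation."""
    if len(daily_sessions) < 7 * weeks + 1:
        raise ValueError("not enough history for the requested number of weeks")
    history = [daily_sessions[-1 - 7 * k] for k in range(1, weeks + 1)]
    return mean(history)


def is_unusual(daily_sessions: List[int], weeks: int = 4, tolerance: float = 0.3) -> bool:
    """Flag the latest day if it deviates from the same-weekday average
    by more than `tolerance` (a relative share; hypothetical default)."""
    baseline = same_weekday_baseline(daily_sessions, weeks)
    latest = daily_sessions[-1]
    return abs(latest - baseline) > tolerance * baseline


if __name__ == "__main__":
    # Made-up data: busy weekdays, quiet weekends.
    week = [1000, 1000, 1000, 1000, 1000, 600, 600]
    normal_weekend = week * 5                   # latest day is a quiet Sunday
    midweek_drop = week * 4 + [1000, 1000, 600] # latest day is a Wednesday
    print(is_unusual(normal_weekend), is_unusual(midweek_drop))
```

In the made-up data, 600 sessions is ordinary for a weekend day but stands out on a Wednesday, which is the kind of drop that a comparison against a single overall average could miss.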

TEST 2 – Hostname overview (W0220)

The next test provides information on all hostnames and their basic measurement statistics (sessions, page views, and transactions) over the past 28 days. This is not a test in the true sense, as it does not automatically evaluate any condition. Instead, it provides information that you need to review in order to decide what steps to take.

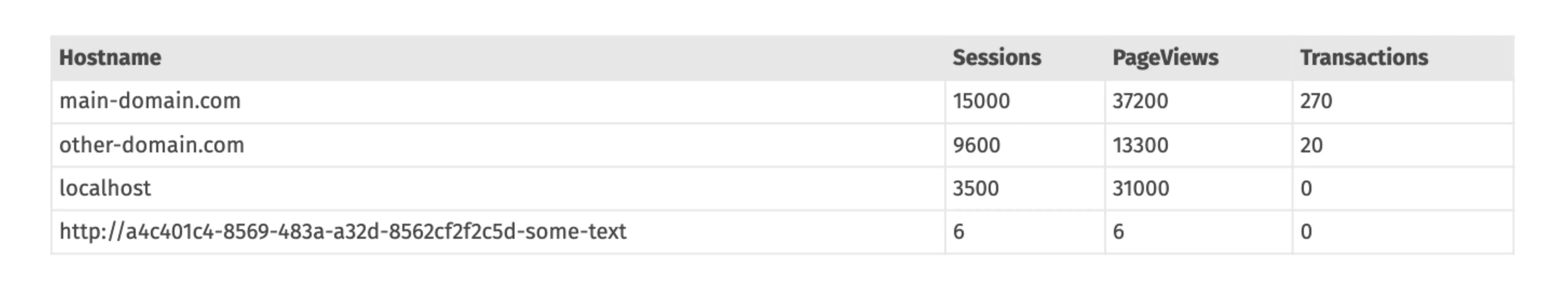

If you have a single-domain website, you should typically find only one line in the table (as illustrated in the table below). If there is anything surprising in the output, you can dig deeper with the help of another prepared test set, Waaila - Hostnames (single-domain), dedicated to checking hostname-related measurement for a single-domain website.

For multi-domain websites, you can analyze your data using the Waaila - Hostnames (multi-domain) test set. You will need to set the list of expected hostnames there to receive the best results. The table below shows an example of a hostname overview for a website with two domains, where the two domains are measured correctly but two other hostnames are incorrectly included.
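The idea of checking hostnames against a list of expected ones can be sketched as follows. This is a minimal illustration rather than the logic of the Waaila hostname test sets; the function name `unexpected_hostnames` and all hostnames in the example are hypothetical.

```python
from typing import Dict, Iterable, List


def unexpected_hostnames(sessions_by_hostname: Dict[str, int],
                         expected: Iterable[str]) -> List[str]:
    """Return measured hostnames that are not in the expected list.

    Comparison is case-insensitive because hostnames are not case-sensitive.
    """
    expected_set = {host.lower() for host in expected}
    return sorted(
        host for host in sessions_by_hostname
        if host.lower() not in expected_set
    )


if __name__ == "__main__":
    # Made-up overview for a site with two legitimate domains.
    overview = {
        "www.example.com": 30_000,
        "shop.example.com": 12_000,
        "localhost": 40,
        "translate.googleusercontent.com": 15,
    }
    print(unexpected_hostnames(overview, ["www.example.com", "shop.example.com"]))
```

Anything the function returns is a candidate for review: it may be a test environment, a proxy or translation service, or a sign that the tracking code is used somewhere it should not be.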

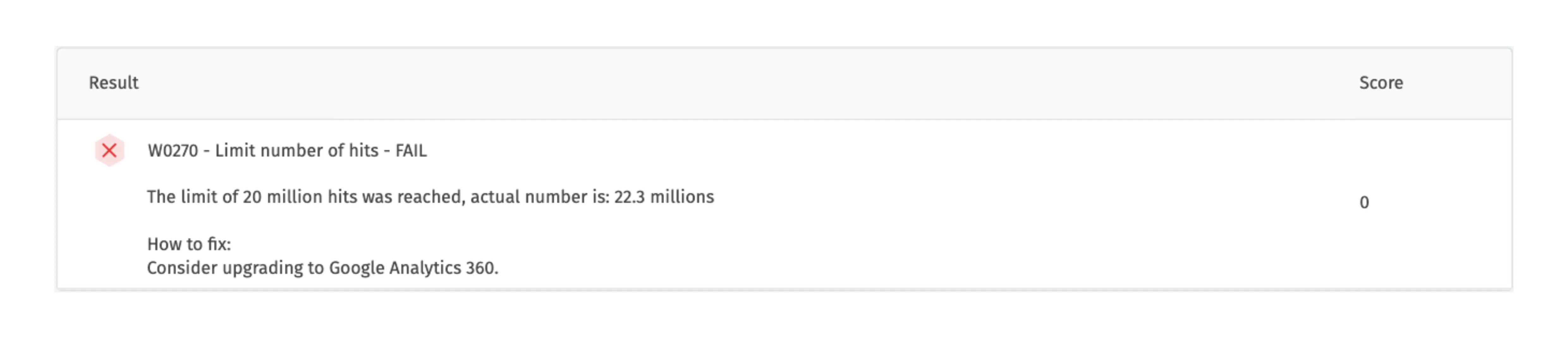

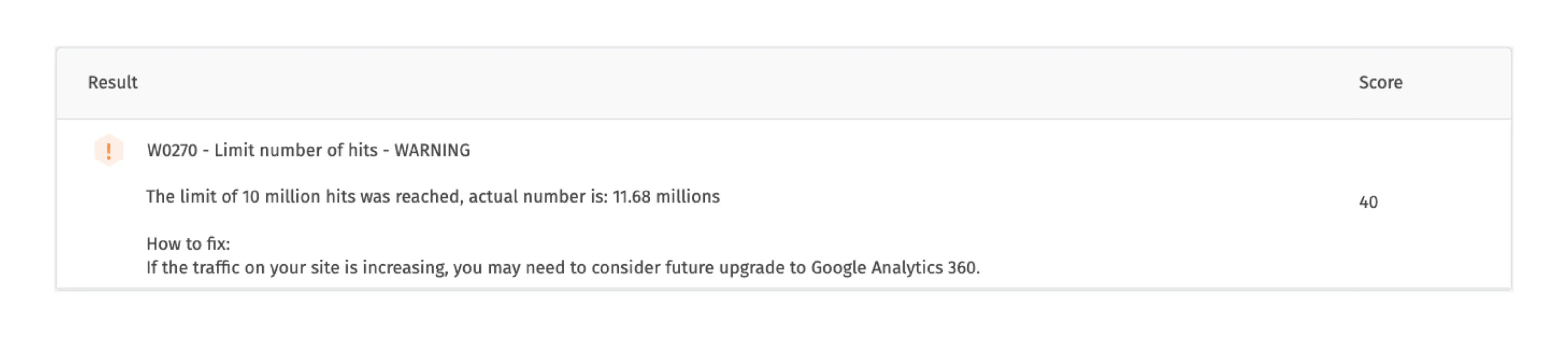

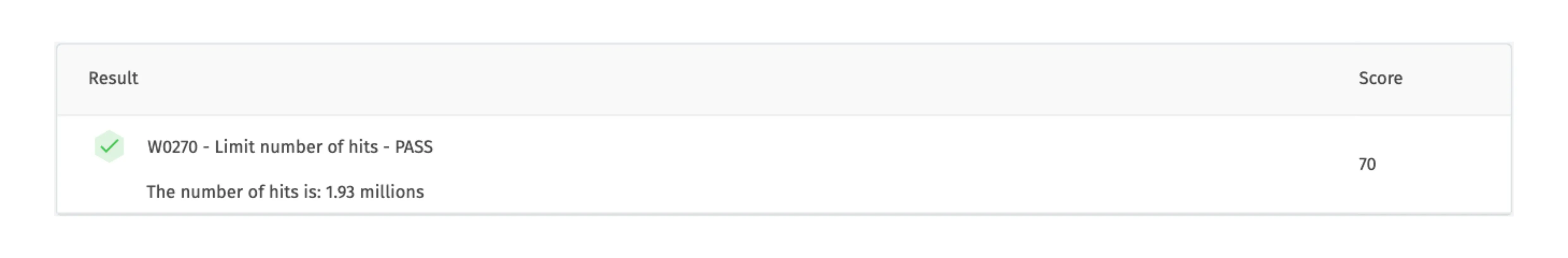

TEST 3 – Limit number of hits (W0270)

The third test checks whether you are using Google Analytics within the limits of its free version. The free version of Google Analytics has a limit on the number of hits it can collect. Ingesting more than 10 million hits a month per property affects how your data are processed and stored. Measurement also becomes slower, and sampling errors become larger than before. However, if you have a paid version of Google Analytics, you do not need to run this test.

The test consists of two checks and an informative value:

- if there are more than 20 million hits in the last 28 days (twice the limit for the free version), the test fails

- when the number of hits reaches the limit of 10 million (but stays below 20 million), the test issues a warning

- if the test passes, it only reports the number of hits

Based on the results, you can decide whether you need to upgrade your Google Analytics version. If no action is needed yet, decide how often you need to run this test to keep an eye on the threshold.
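The three outcomes described above can be sketched as a small Python function. This is an illustration under stated assumptions, not the test's actual code: the names `FREE_LIMIT` and `evaluate_hits` are hypothetical, and the result for exactly 20 million hits, which the description leaves open, is treated here as a warning.

```python
FREE_LIMIT = 10_000_000  # monthly hit limit of the free version, per the text above


def evaluate_hits(hits: int, limit: int = FREE_LIMIT) -> str:
    """Classify the number of hits in the last 28 days as pass, warning, or fail."""
    if hits > 2 * limit:
        return "fail"      # more than twice the limit
    if hits >= limit:
        # Limit reached; exactly 2 * limit also lands here (assumption).
        return "warning"
    return "pass"          # below the limit, only the number is reported


if __name__ == "__main__":
    for hits in (4_500_000, 12_000_000, 25_000_000):
        print(hits, evaluate_hits(hits))
```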

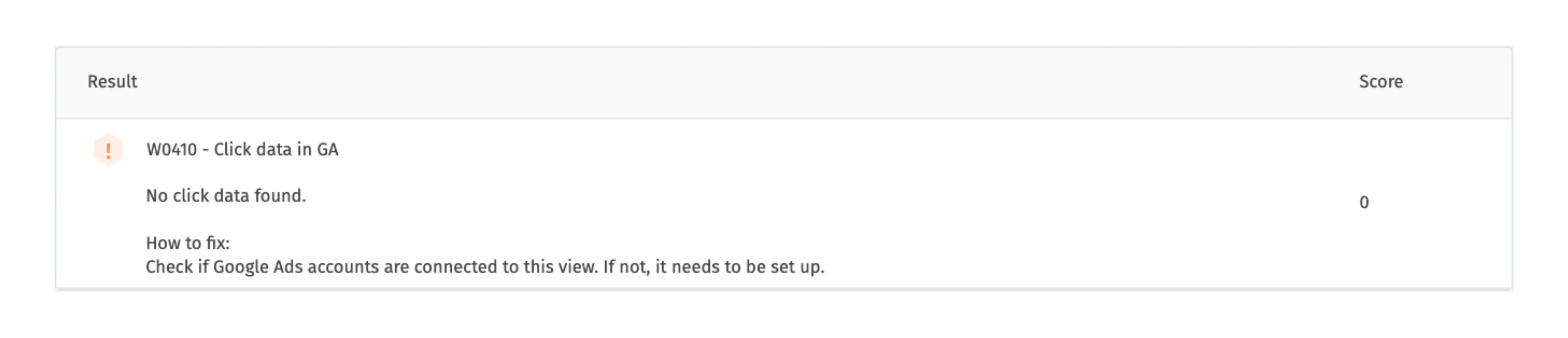

TEST 4 – Click data in GA (W0410)

The fourth test offers another simple verification that the data you need are actually measured. In this case, it focuses on advertisement clicks and determines whether the number of clicks on ads in the past 28 days is above zero. These clicks are evaluated to measure how intensively the Ads (CPC) are used. If no clicks are recorded in Google Analytics, it can indicate that Google Ads is not connected to Google Analytics or that there is a technical issue on your website.

If no advertisement clicks are measured, the test issues a warning, as can be seen in the picture below. As in the previous test, Waaila uses a warning rather than a fail when the problem found is less serious. In the previous test, hits at the limit can still grow and be measured. Here, missing information on advertisement clicks does not immediately threaten to decrease profit.

Further prepared tests for the investigation of basic measurement can be found mainly in two sets of tests available in the marketplace: Waaila - General Information and Checks and Waaila - Measurement Overview.

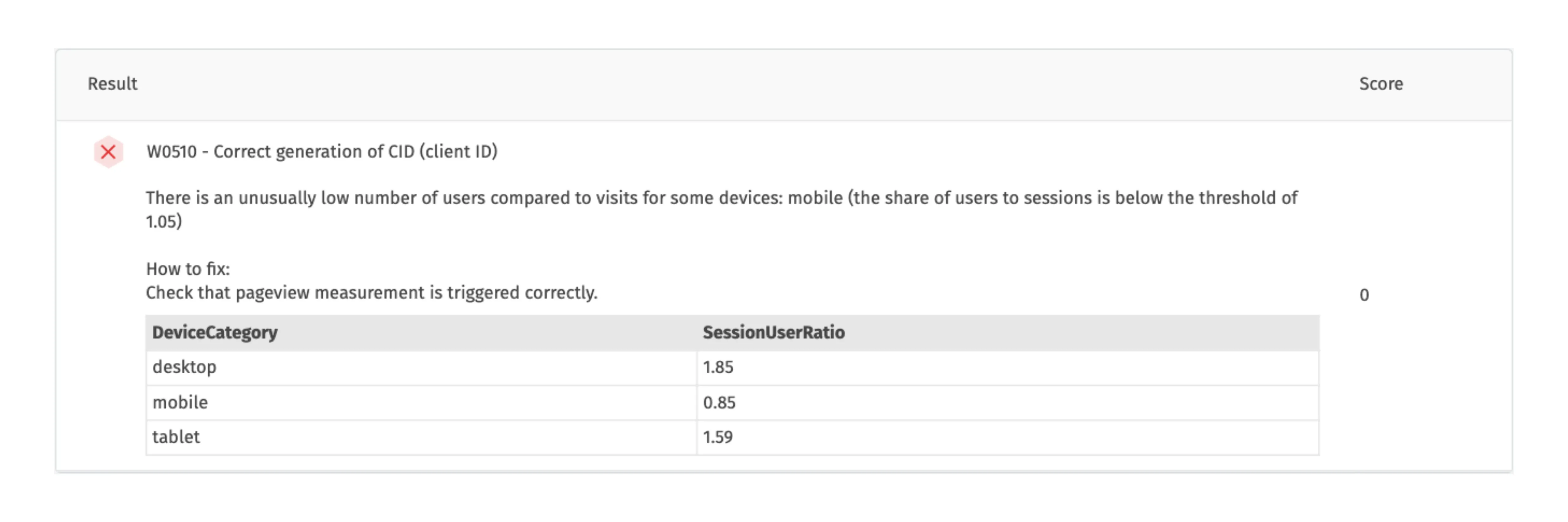

TEST 5 - Correct generation of CID (client ID) (W0510)

The last test in the Waaila – GA Starting Kit checks the generation of client IDs by comparing the number of users with the number of sessions. There should be noticeably more sessions than users. If this does not hold, it might indicate a technical problem and will therefore require deeper analysis.

If the ratio of sessions per user falls below a certain threshold, the test fails. The default threshold used by the test is 1.05, so there can be as few as 5% more sessions than users (e.g., if only 5% of clients return for a second session and nobody for a third one) before the test fails. The results are presented by device type so that you can see for which device there has been a problem (in the presented example, there is an issue with measurement for mobile users).
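The ratio check per device type can be sketched as below. This is a minimal sketch, not the test's actual implementation: the function name `sessions_per_user_check`, the input shape, the "no data" label for devices without users, and the example figures are all assumptions made for illustration. Only the default threshold of 1.05 comes from the description above.

```python
from typing import Dict, Tuple


def sessions_per_user_check(stats: Dict[str, Tuple[int, int]],
                            threshold: float = 1.05) -> Dict[str, str]:
    """Evaluate sessions per user for each device type.

    `stats` maps a device type to (sessions, users). A device fails when
    its ratio of sessions to users is below `threshold`.
    """
    results = {}
    for device, (sessions, users) in stats.items():
        if users == 0:
            results[device] = "no data"  # avoid division by zero (assumed label)
            continue
        ratio = sessions / users
        results[device] = "pass" if ratio >= threshold else "fail"
    return results


if __name__ == "__main__":
    # Made-up figures: mobile has almost as many users as sessions.
    example = {
        "desktop": (13_000, 10_000),
        "mobile": (20_200, 20_000),
        "tablet": (1_500, 1_200),
    }
    print(sessions_per_user_check(example))
```

With the made-up figures, desktop (1.3) and tablet (1.25) pass, while mobile (1.01) fails, which matches the kind of device-specific problem described above.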

Summary

To summarize, the Waaila application offers you a great way to evaluate the quality of your data measurement. This article introduces Waaila by focusing on one of its prepared test sets, the Waaila – GA Starting Kit, explaining the mechanics of the tests and presenting them with illustrative examples. Along with the test explanations, we included further recommendations for individual tests and other test sets, so from here you can proceed directly with your data evaluation in Waaila.

If the prepared tests do not cover all your needs, you can write your own tests with the help of our extensive documentation.

If you have any questions regarding Waaila, you can contact us via the Waaila website or write directly to the Waaila support team.